Last month Lex Toumbourou got a parking fine in Cairns. An AI chatbot got him out of it.

The bot whipped up a serviceable bit of official-ese to point out that Toumbourou actually had paid for the parking spot but accidentally got one digit of his car’s licence plate wrong.

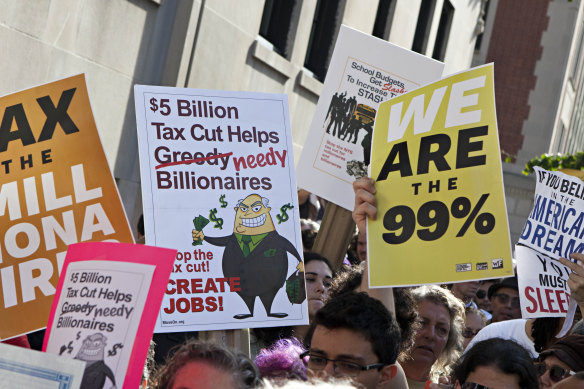

David Graeber, an anarchist anthropologist, examined how so many people were stuck in jobs they disliked when society produced enough to create extraordinary wealth of the kind that triggered the Occupy Wall Street protests (pictured). Credit: AAP

“You know, with these kind of admin emails, they all sort of follow a really particular format,” said Toumbourou. “No one’s reading them that closely.”

Whoever at the local council reads such claims clearly got the gist of his appeal and let him off the $66 fine.

The appeal and assessment of parking fines is the kind of process that the late radical anthropologist David Graeber had in mind when he wrote a 2013 screed against “bullsh-t jobs”: the kind of work that even the people doing it privately concede doesn’t really need to exist.

Graeber created a whole typology to explain the roles that were eating up people’s time even as technology became ever more efficient. He called them “flunkies” (who exist to make their boss look good), “goons” (paid to cajole others into doing their employers’ bidding), “duct tapers” (papering over problems that could just be fixed), “taskmasters” (doing superfluous management) and lastly, “box tickers” (like the lawyer whom Toumbourou might have had to engage to dispute a parking fine if the AI hadn’t been there to do it).

Whatever the merits of Graeber’s theory, which is provocative, judgmental and subject to fierce dispute, might his classification give us a clue to the kind of jobs vulnerable to an AI revolution?

Here’s the case for a new wave of AI bots taking over at least some of these jobs. Unlike past systems that worked only for a narrow use (playing chess, answering queries they were specifically programmed to comprehend), the current generation have absorbed much of the material on the internet. That lets them draw links and provide answers to a huge range of queries and prompts by predicting what comes next.

As my colleague Liam Mannix wrote in his Examine newsletter, these bots have a key limitation: “[They] know which words come next without knowing what they mean.”

It is a ceiling that prevents bots creating truly new work or ideas, but that’s not the role of the people labouring in “bullsh-t jobs”.

Consider an entry-level public relations agent. This person’s job is to take a company announcement, perhaps of a new product, package it up with some background details and send it to journalists. All the information comes from the company. It’s the job of the PR person to package and contextualise, over and over. The same goes for someone who writes the text for firms’ ads that appear in Google searches. None of the information is their own. It’s perfect work for an AI bot.

So things might look bad for the “box tickers” and some “goons”, but a set of their peers are also vulnerable. Gig economy firms such as Uber have figured out ways to do without a whole layer of management, assigning trips to rideshare drivers and assessing their performance largely via algorithm, so “taskmasters” could be under threat too.

More than a few companies respond to customer queries via AI bots that direct users back to already accessible FAQ pages, giving “duct tapers” a rival. “Flunkies” might be replaced with the swarms of fake accounts that a user can pay to bolster their reputation on social media with flattering posts.

Whether any of that is a good thing is deeply unclear. Indeed it might prompt a reassessment of whether such jobs are “bullsh-t” at all.

And there is a case for bullsh-t jobs persisting, or even proliferating because of AI. Toumbourou, who as the chief technology officer of an AI music start-up called Splash was perfectly placed to have the AI bot write his parking appeal, concedes it was a bit of a stunt. “I must admit it probably didn’t save me that much time,” he said. “I did it more just to see if I could.”

The reason using an AI bot didn’t save Toumbourou that much time is that he needed to tell it what to write and then fix up some issues with the text. In other words, it’s amazing that a bot can create something 80 per cent as good as a human. But in many situations, 80 per cent perfect is useless. A press release that is 80 per cent right could still mislead investors. Board minutes that capture only 80 per cent of decisions correctly would be a disaster.

And so one can imagine a future – at least for the medium term until the AI systems improve again – full of people who merely review, correct and disseminate the output of AI.
