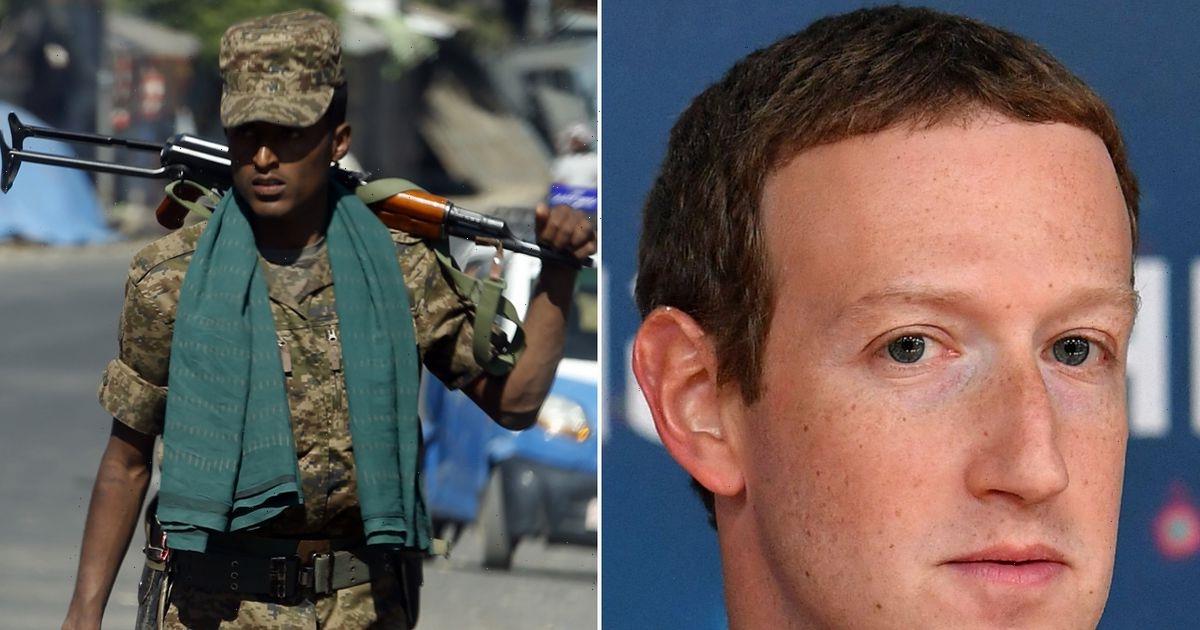

Mark Zuckerberg's company Meta is facing a massive $2bn (£1.6bn) legal case claiming its content algorithms helped fan the flames of a civil war in Ethiopia.

Over the last two years, the east African country has seen thousands of people die amid ethnic tensions and killings in the northern region of Tigray.

Meta is being sued by a group of researchers who claim that the violence was in part fuelled by hate speech, misinformation and violent content on Facebook and Instagram.

The social media giant is accused of failing to train its algorithms to detect dangerous posts and of not hiring enough staff to moderate content. Meta denies the claims.

One of the people involved in the lawsuit, Abrham Meareg, has demanded an apology from Facebook after his father was shot and killed by armed men who followed him home on motorbikes.

His father, Professor Meareg Amare Abrha, was allegedly targeted by Facebook posts which 'slandered' the academic, used ethnic slurs against him, and revealed his address before his death.

Prof. Meareg's son claims that despite these posts being reported using Facebook's moderation tool, the platform "left these posts up until it was far too late".

He and other complainants are asking the Kenyan High Court to force Meta to increase its moderation staff, demote violent content, and pay $2bn (£1.6bn) in funds to victims of violence 'incited on Facebook'.

In a court statement, Mr Meareg said: "If Facebook had just stopped the spread of hate and moderated posts properly, my father would still be alive." He also claimed that Facebook's algorithm promotes 'hateful and inciting content', and argued that Facebook's content moderation in Africa is 'woefully inadequate'.

However, a Meta spokesperson told the BBC that hate speech and violent content are against the platform's rules, and that it invests 'heavily' in moderation and tech to remove hateful content.

The spokesperson said: "Our safety-and-integrity work in Ethiopia is guided by feedback from local civil society organisations and international institutions."

They added: "We employ staff with local knowledge and expertise and continue to develop our capabilities to catch violating content in the most widely spoken languages in the country, including Amharic, Oromo, Somali and Tigrinya."

The firm also argues that it has taken steps to address harmful content in Ethiopia specifically, by reducing the 'virality' of posts, enhancing enforcement, and expanding its policies around violence and incitement.
