Facebook for the first time on Thursday disclosed numbers on the prevalence of hate speech on its platform, saying that out of every 10,000 content views in the third quarter, 10 to 11 included hate speech.

The world’s largest social media company, under scrutiny over its policing of abuses, particularly around November’s presidential election, released the estimate in its quarterly content moderation report.

On a call with reporters, Facebook’s head of safety and integrity Guy Rosen said that from March 1 to the Nov. 3 election, the company removed more than 265,000 pieces of content from Facebook and Instagram in the United States for violating its voter interference policies.

Facebook also said it took action on 22.1 million pieces of hate speech content in the third quarter, about 95 percent of which was proactively identified. It took action on 22.5 million in the previous quarter.

The company defines “taking action” as removing content, covering it with a warning, disabling accounts, or escalating it to external agencies.

Facebook’s photo-sharing site Instagram took action on 6.5 million pieces of hate speech content, up from 3.2 million in the second quarter. About 95 percent of this was proactively identified, a 10 percent increase from the previous quarter.

This summer, civil rights groups organized a widespread Facebook advertising boycott to try to pressure social media companies to act against hate speech.

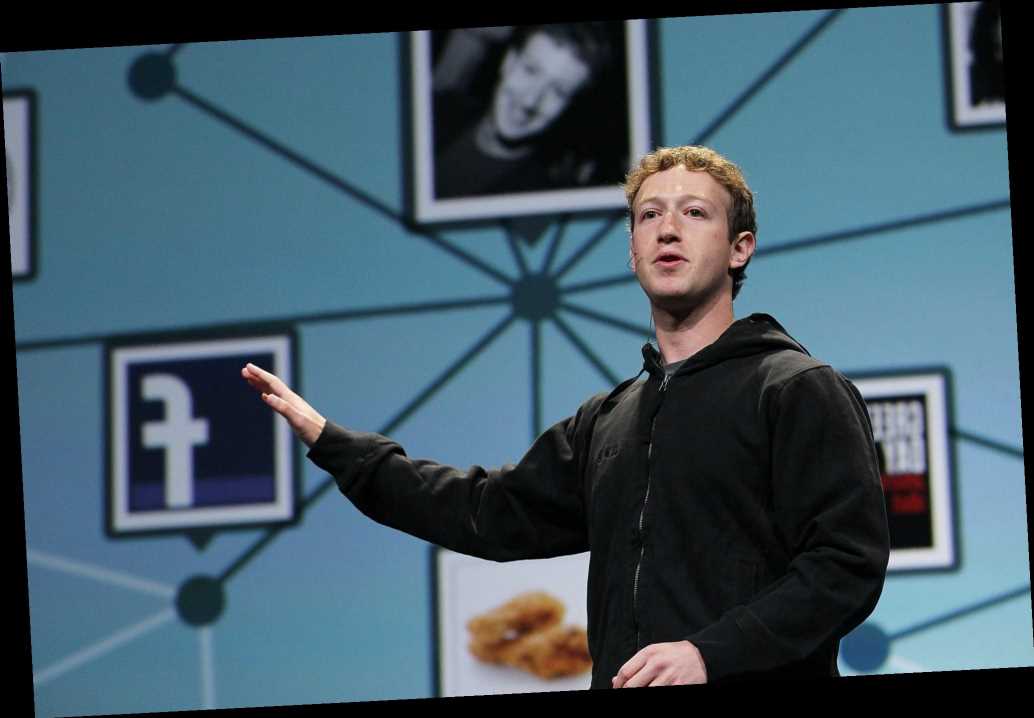

In October, Facebook said it was updating its hate speech policy to ban any content that denies or distorts the Holocaust, a turnaround from public comments Facebook’s Chief Executive Mark Zuckerberg had made about what should be allowed on the platform.

Facebook said it took action on 19.2 million pieces of violent and graphic content in the third quarter, up from 15 million in the second. On Instagram, it took action on 4.1 million pieces of violent and graphic content, up from 3.1 million in the second quarter.

Rosen said the company expected to have an independent audit of its content enforcement numbers “over the course of 2021.”

Earlier this week, Zuckerberg and Twitter CEO Jack Dorsey were grilled by Congress on their companies’ content moderation practices, from Republican allegations of political bias to decisions about violent speech.

Last week, Reuters reported that Zuckerberg told an all-staff meeting that former Trump White House adviser Steve Bannon had not violated enough of the company’s policies to justify suspension when he urged the beheading of two senior US officials.

The company has also been criticized in recent months for allowing rapidly growing Facebook groups that share false election claims and violent rhetoric to gain traction.

Facebook said its rates for finding rule-breaking content before users reported it were up in most areas, due to improvements in artificial intelligence tools and the expansion of its detection technologies to more languages.

In a blog post, Facebook said the COVID-19 pandemic continued to disrupt its content-review workforce, though it said some enforcement metrics were returning to pre-pandemic levels.

An open letter from more than 200 Facebook content moderators, published on Wednesday, accused the company of forcing these workers back to the office and “needlessly risking” lives during the pandemic.

“The facilities meet or exceed the guidance on a safe workspace,” said Facebook’s Rosen on Thursday’s call.
